Understanding Machine Learning Bias and Its Legal Implications

As consumer AI tools like ChatGPT gain popularity, concerns about bias in machine learning (ML) are growing. These biases can lead to more than user frustration—they can spark legal disputes. A software expert witness or AI expert witness may be called upon to analyze these issues in court. This article explores machine learning bias, its legal risks, and how developers can mitigate them.

What Is Machine Learning Bias?

Machine learning bias occurs when ML systems produce unfair outcomes, often reflecting societal biases related to race, gender, age, or culture. For instance, AI art tools like Stable Diffusion or Midjourney have faced criticism for generating offensive imagery, such as depictions of Black individuals based on racist stereotypes. These incidents, often shared on platforms like X, can harm a company’s reputation and prompt legal scrutiny, requiring testimony from an AI expert witness.

A notable example involves the PortraitAI art generator, where users of color reported inaccurate AI-generated portraits. The issue stemmed from training data dominated by Renaissance-era paintings of white Europeans, highlighting the need for diverse datasets. A software expert witness might analyze such cases to identify flaws in algorithm design or data selection.

Types of Machine Learning Bias

There are three primary types of bias in ML:

- Algorithmic Bias: Flaws in the AI model’s design introduce bias.

- Data Bias: Training data lacks diversity or contains prejudiced patterns.

- Societal Bias: Developers’ blind spots, shaped by societal norms, lead to unrecognized biases in algorithms or data.

AI systems are built by humans, so they often inherit human biases. An AI expert witness can help courts understand these technical nuances, clarifying how bias manifests in ML systems.
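
Data bias of the kind listed above can often be spotted before training by measuring how well each group is represented in the dataset. The following is a minimal Python sketch, not a definitive method. The function names, the group labels, and the 10% threshold are all hypothetical choices made for illustration; a real review would need labels and thresholds suited to the application.

```python
from collections import Counter


def group_shares(labels):
    """Return each group's share of the dataset as a fraction of all records."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def underrepresented(labels, min_share=0.10):
    """List groups whose share falls below min_share.

    min_share is an illustrative threshold, not a legal or industry standard.
    """
    shares = group_shares(labels)
    return sorted(group for group, share in shares.items() if share < min_share)


# Hypothetical demographic labels attached to a training set of 100 images.
sample = ["group_a"] * 90 + ["group_b"] * 7 + ["group_c"] * 3

print(group_shares(sample))
print(underrepresented(sample))
```

A check like this only reveals gaps in representation. It says nothing about prejudiced patterns inside the data or about flaws in the model's design, which need separate review.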

Legal Implications of Machine Learning Bias

Bias in ML can lead to legal challenges, particularly under anti-discrimination laws. For example, Google faced backlash for its advertising system, which allowed advertisers on Google and YouTube to exclude people of “unknown gender” (e.g., nonbinary or transgender individuals). This violated federal anti-discrimination laws, as reported by Wired. A software expert witness could evaluate the algorithms behind such systems to determine compliance with legal standards.

In the U.S., regulation of AI bias is limited but evolving. The American Bar Association issued a resolution in 2024 urging the legal profession to address ethical and legal issues in ML. The EU’s General Data Protection Regulation (GDPR) offers some oversight, but gaps remain. The AI Now Institute advocates for stricter AI regulation in sensitive areas like criminal justice and healthcare. A 2022 paper in AI and Ethics proposed a legally binding framework to address ML bias, suggesting independent bodies with prosecution powers and significant fines to deter violations.

Microsoft’s Brad Smith has called for impartial testing groups to evaluate AI systems, such as facial recognition, for accuracy and bias, as noted in a Reuters article. An AI expert witness might testify on the fairness of such systems in legal proceedings.

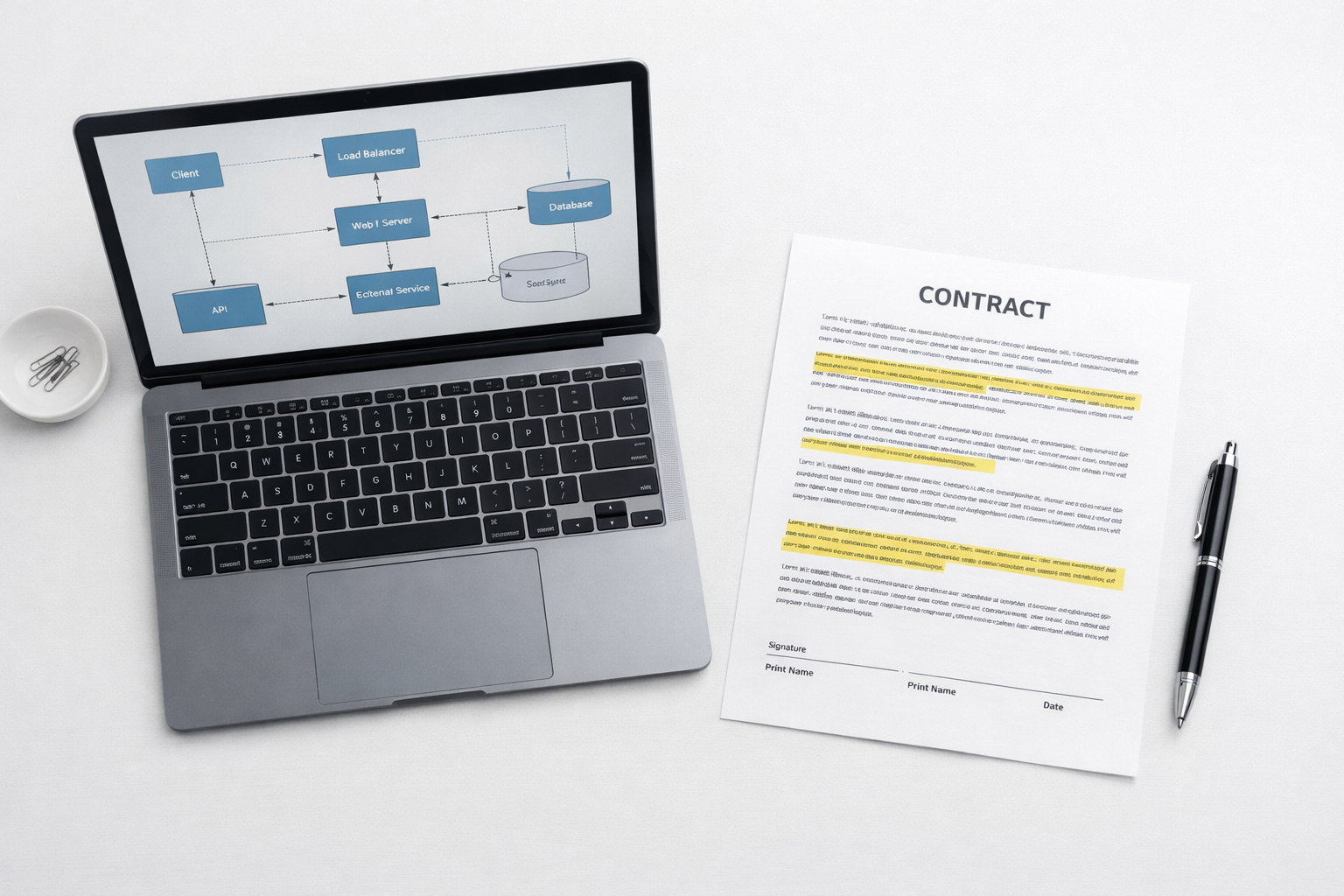

How Can AI Developers Minimize Legal Risks?

To reduce legal exposure, AI developers and businesses can take proactive steps, guided by insights from an AI expert:

- Understand Protected Classes: In the U.S., protected classes include age, race, gender, religion, disability, and ethnicity. Algorithms must be designed and audited to ensure fairness across these groups. Regular audits can identify biases before they lead to legal issues.

- Ensure Diverse Training Data: Underrepresentation in training data often causes bias. For example, Google’s algorithms once misidentified Black individuals as gorillas because the training data lacked sufficient diversity, as reported by The Guardian. Similarly, voice assistants like Siri struggle with accents when training data lacks variety. Training on data that represents the full range of intended users mitigates these risks.
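
One simple form a regular audit can take is comparing how often each group receives a favorable outcome from the system. The sketch below is a rough illustration in Python under stated assumptions: the record format, the function names, and the group labels are hypothetical, and the 0.8 threshold borrows the "four-fifths" rule of thumb from U.S. employment guidance purely as an example cutoff. It is not a test of legal compliance.

```python
from collections import defaultdict


def selection_rates(records):
    """Compute the favorable-outcome rate per group.

    records: iterable of (group, outcome) pairs, where outcome is True
    when the system produced the favorable result for that person.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            favorable[group] += 1
    return {group: favorable[group] / totals[group] for group in totals}


def impact_ratios(records, reference_group):
    """Divide each group's rate by the reference group's rate."""
    rates = selection_rates(records)
    reference_rate = rates[reference_group]
    if reference_rate == 0:
        raise ValueError("reference group has no favorable outcomes")
    return {group: rate / reference_rate for group, rate in rates.items()}


def flag_groups(records, reference_group, threshold=0.8):
    """Return groups whose impact ratio falls below the threshold.

    The default of 0.8 is an illustrative assumption, not a legal standard.
    """
    ratios = impact_ratios(records, reference_group)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical audit log: 100 decisions per group.
audit_log = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)

print(selection_rates(audit_log))
print(flag_groups(audit_log, reference_group="group_a"))
```

A flagged group is a prompt for closer investigation rather than proof of unlawful bias. Whether a disparity matters legally depends on the context and the applicable law.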

Conclusion

Addressing bias in machine learning is critical from both legal and business perspectives. Companies must ensure their algorithms treat protected classes fairly to avoid lawsuits and reputational damage. Consulting a software expert witness or AI expert witness can help identify and resolve bias issues. Sidespin Group offers expertise to implement processes for fair and compliant ML systems. For more on AI regulation, visit xAI’s API page for developer resources.
